Recall and Precision

Static code analysis examines source or binary code without executing it in order to detect potential bugs and vulnerabilities. Weaknesses in the code can be identified and precisely localised. Static code analysis tools scan the entire code base and find vulnerabilities even if they exist only in the remotest corners of the application. Because static code analysis does not need to execute the code, it can be used early in the development process, so defects are found early and are cheaper to fix. Nonetheless, automated static analysis tools also have a disadvantage: they produce false positives and false negatives.

What does this mean?

The results of an analysis can be classified as follows:

- True Positive - a real, actual error is reported.

- False Positive - an error is reported that in reality is not one.

- False Negative - an error is “overlooked” during analysis and thus is not reported.

- True Negative - a non-existent error is not reported; the tool does not trigger a “false alarm”.
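The four categories above can be sketched with plain set operations. This is only an illustration: the location names and the assumption that the ground truth (`actual_defects`) is fully known are hypothetical, as they would be in a benchmark with seeded defects.

```python
# Hypothetical data: every location the tool examined, written as "file:line".
checked_locations = {"a.c:10", "a.c:42", "b.c:7", "b.c:19", "c.c:3", "c.c:88"}
# Locations that really contain a defect (ground truth, assumed to be known).
actual_defects = {"a.c:10", "b.c:7", "c.c:88"}
# Locations for which the tool issued a warning.
reported = {"a.c:10", "b.c:19", "c.c:88"}

true_positives = reported & actual_defects    # real errors that were reported
false_positives = reported - actual_defects   # reported, but not real errors
false_negatives = actual_defects - reported   # real errors that were overlooked
true_negatives = checked_locations - reported - actual_defects  # correctly silent

for name, group in [
    ("True Positives", true_positives),
    ("False Positives", false_positives),
    ("False Negatives", false_negatives),
    ("True Negatives", true_negatives),
]:
    print(f"{name}: {sorted(group)}")
```

Every checked location falls into exactly one of the four groups, which is why the counts can later be combined into ratios.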

The quality of a static code analysis tool is determined by, among other things, its Recall and Precision.

Recall and Precision are calculated from the numbers of True Positives, False Positives and False Negatives:

Recall = number of True Positives / (number of True Positives + number of False Negatives)

Precision = number of True Positives / (number of True Positives + number of False Positives)
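As a minimal sketch, the two formulas translate directly into code. The function names and the sample counts are illustrative, and returning 1.0 when the denominator is zero is a convention chosen for this example, not part of the definitions.

```python
def recall(true_positives: int, false_negatives: int) -> float:
    """Share of the real errors that the tool reported."""
    total = true_positives + false_negatives
    # Assumed convention: with no real errors at all, nothing was missed.
    return true_positives / total if total else 1.0


def precision(true_positives: int, false_positives: int) -> float:
    """Share of the reported errors that are real."""
    total = true_positives + false_positives
    # Assumed convention: with no reports at all, there were no false alarms.
    return true_positives / total if total else 1.0


# Hypothetical result: 8 real errors reported, 2 false alarms, 4 errors missed.
print(f"Recall:    {recall(8, 4):.2f}")     # 8 / (8 + 4)
print(f"Precision: {precision(8, 2):.2f}")  # 8 / (8 + 2)
```

With these counts the tool finds two thirds of the real errors, and four out of five of its warnings are genuine.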

The perfect tool would have perfect Recall (value 1, i.e. no False Negatives) and perfect Precision (value 1, i.e. no False Positives).

This cannot be achieved with the tools currently available on the market.

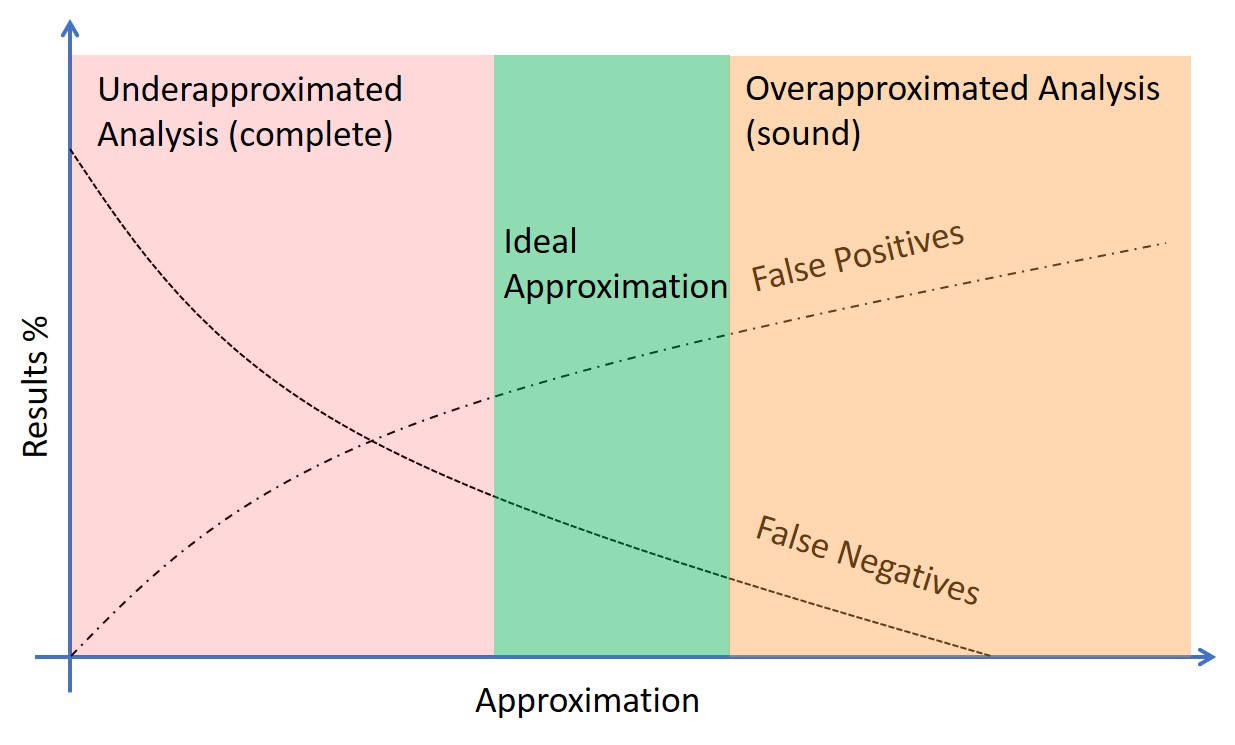

Complete vs. Sound Analysis

However, there are analysis tools which come close to an optimal analysis.

Underapproximated analyses are complete: they report few if any False Positives, but at the same time produce a high number of False Negatives. (Nearly) all reported errors are real errors, though a high number of errors remain undetected.

Overapproximated analyses are sound: they have a small number of False Negatives but report a high number of False Positives. (Nearly) all errors are detected, but there are many “false alarms” which need to be reviewed.

In practice, the best “compromise” lies somewhere between the two: relatively few False Positives and relatively few False Negatives. Only a few errors are overlooked, and there are only a small number of “false alarms”.
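To make the trade-off concrete, the following sketch compares three analysis profiles on a code base assumed to contain 100 real errors. All counts are invented for illustration and do not describe any particular tool.

```python
# Hypothetical counts per profile: (True Positives, False Positives, False Negatives).
# Each profile is run on the same code base with 100 real errors (TP + FN = 100).
profiles = {
    "underapproximated": (40, 2, 60),    # few false alarms, many errors missed
    "overapproximated": (95, 300, 5),    # few errors missed, many false alarms
    "balanced": (85, 15, 15),            # the compromise between the two
}

results = {}
for name, (tp, fp, fn) in profiles.items():
    recall = tp / (tp + fn)      # share of real errors that were found
    precision = tp / (tp + fp)   # share of reports that are real errors
    results[name] = (recall, precision)
    print(f"{name:18s} recall={recall:.2f} precision={precision:.2f}")
```

In this made-up data the underapproximated profile has the highest Precision and the lowest Recall, the overapproximated profile the reverse, and the balanced profile gives up a little of each to keep both values high.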

CodeSecure's CodeSonar offers such an analysis.